These are the 7 key points this article covers on the 5×5 risk assessment matrix:

- What a 5×5 risk assessment matrix is and what it fixes

- Why it fits audit-ready risk assessment

- How to define likelihood and impact scales (1 to 5)

- How to fill the cells without intuition

- How to convert scores into treatment plans

- How to maintain and review the matrix over time

- Common mistakes that can block progress

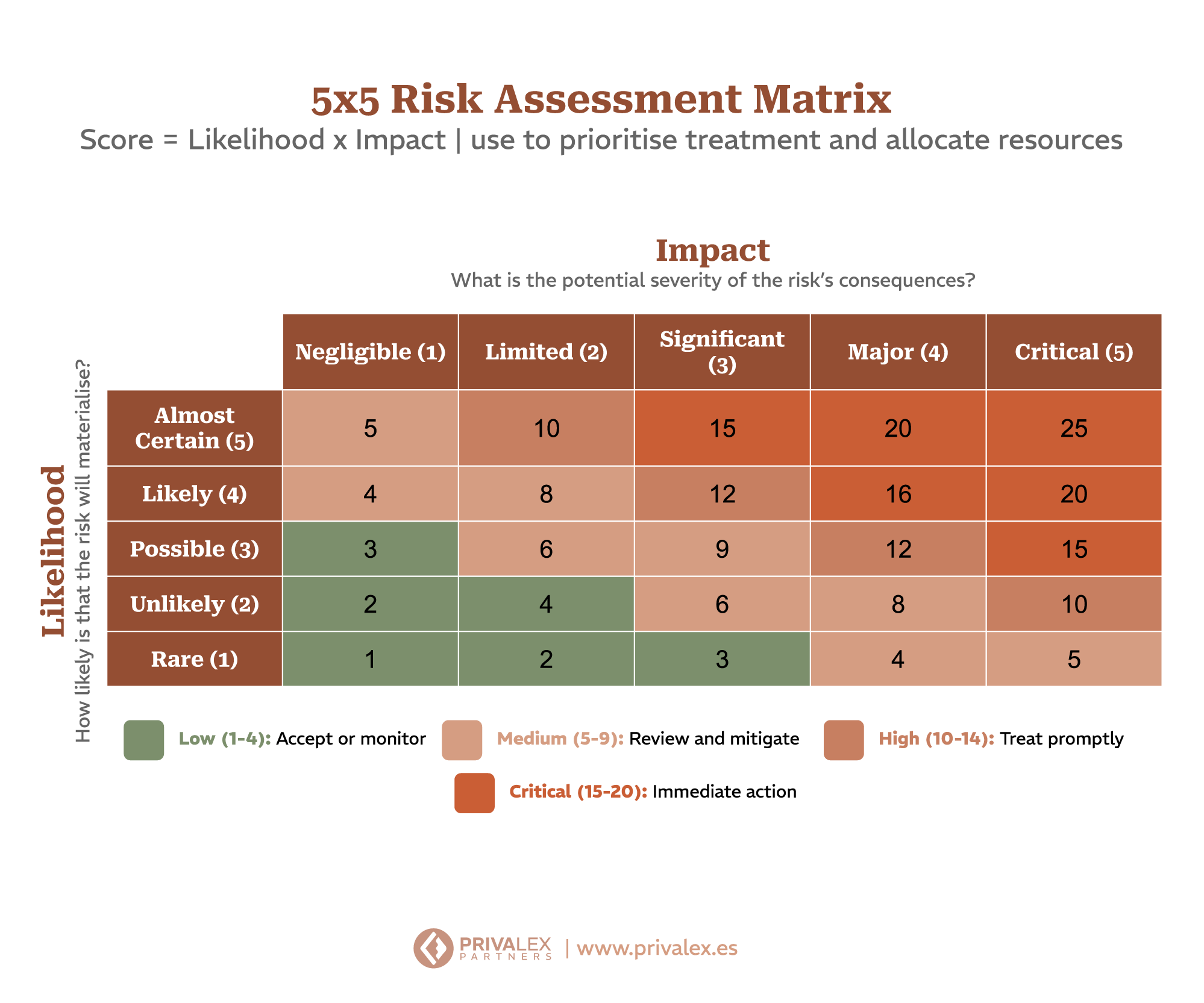

A 5×5 risk assessment matrix turns “we have risk” into repeatable decisions. It does this by rating two variables, likelihood and impact, and plotting them on a simple map that shows where to act first.

What a 5×5 risk assessment matrix is (and when to use it)

A 5×5 risk assessment matrix is a visual way to rate risks. It assigns a value from 1 to 5 to both likelihood and impact.

The cell where the two values intersect defines the risk level. It is useful when you need:

- consistent assessments across teams,

- and a prioritization method that is understandable for leadership.

It is not magic. It is discipline.

A 5×5 matrix works only when it is connected to your process. In practice: you define criteria, you assess risks, you decide treatments, and you record evidence.

If any of those parts are missing, the matrix becomes a “nice picture” without governance value.

This approach fits well when you can describe risks as scenarios. For example: “a third-party change introduces an exploitable vulnerability” or “a user repeats an insecure access practice”.

If a risk is too abstract, it becomes hard to justify likelihood and impact consistently.

Before you fill cells, you need:

- assets in scope (systems, data, or processes),

- scenarios with relevant threats and vulnerabilities,

- and a list of existing controls (so likelihood is not based on gut feeling).

Why it aligns with ISO 27001 risk assessment expectations

ISO 27001 requires that risk assessment is consistent, documented, and reviewed. The core idea is to define risk assessment criteria and ensure evaluations remain comparable over time.

In practice, this means you cannot reinvent the scales for every evaluation. A 5×5 risk assessment matrix helps standardize how you rate likelihood and impact.

Auditors usually do not stop at the final score. They ask: “why is this value a 4 and not a 3?” and “how do you ensure the same criterion tomorrow?”.

A strong answer is traceability: criteria, assumptions, and evidence.

Your matrix must lead to action. If results never translate into treatments, owners, and review cycles, you lose the point of the exercise.

Design your matrix as a bridge between the risk register and your control plan. If you are aligning your method with a certification journey, refer to the steps to obtain ISO 27001.

For background on ISO standards, you can start with ISO.

How to define likelihood and impact scales (1 to 5)

The goal is not to “add numbers”. The goal is that each number has a meaning you can justify. The practical rule: every value needs a short definition, an observable signal, and a simple example.

That prevents each evaluator from “filling with their experience” and breaking comparability.

Step 1: define likelihood criteria

Likelihood reflects how likely a scenario is. Instead of “high or low”, define observable signals: frequency, exposure, history, and existing controls.

For “likelihood 2”, define conditions such as:

- low frequency in history or strong barriers in existing controls,

- limited exposure by design (smaller surface, fewer privileges, more segmentation),

- and no relevant changes recently in the evaluated scope.

Step 2: define impact criteria

Impact reflects consequences for confidentiality, integrity, and availability. Define ranges from low-impact operational disruption to critical harm to services or data.

Impact definitions usually work better when you split them into:

- confidentiality (data exposure),

- integrity (unauthorized changes or loss of correctness),

- and availability (service outage or sustained degradation).

Then you translate each level into consequence ranges for your business.
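One way to combine the three dimensions is to rate each one separately and take the highest value as the overall impact. The sketch below assumes that worst-case rule; the function name `overall_impact` and the rule itself are illustrative choices, not something ISO 27001 prescribes.

```python
def overall_impact(confidentiality: int, integrity: int, availability: int) -> int:
    """Return the overall impact (1-5) as the worst of the three CIA ratings."""
    ratings = (confidentiality, integrity, availability)
    for value in ratings:
        if not 1 <= value <= 5:
            raise ValueError(f"rating {value} is outside the 1-5 scale")
    return max(ratings)


print(overall_impact(confidentiality=4, integrity=3, availability=2))  # prints 4
```

If your organization prefers an average or a weighted rule, the same structure applies; what matters is that the rule is written down and applied the same way every time.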

Step 3: document the mapping between language and scale

If someone says “impact 4”, the reader should understand why. That creates traceability.

For each level, document briefly:

- what it means,

- what evidence supports it,

- and what you expect to happen if the scenario materializes.
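The three items above can be captured as one record per level, which makes it easy to check whether a scale is fully documented. This is a minimal sketch; the `ScaleLevel` class, the `missing_levels` helper, and the sample wording are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScaleLevel:
    value: int        # 1 to 5
    definition: str   # what the level means
    signal: str       # observable evidence that supports it
    example: str      # what you expect to happen at this level


def missing_levels(scale: list[ScaleLevel]) -> list[int]:
    """Return the values 1-5 that do not yet have a complete definition."""
    complete = {
        level.value
        for level in scale
        if level.definition.strip() and level.signal.strip() and level.example.strip()
    }
    return [value for value in range(1, 6) if value not in complete]


# Hypothetical, partially documented likelihood scale.
likelihood_scale = [
    ScaleLevel(2, "Unlikely", "No incident on record; strong existing controls",
               "Attempt is stopped by segmentation before reaching the asset"),
    ScaleLevel(3, "Possible", "", ""),  # still undocumented
]

print(missing_levels(likelihood_scale))  # prints [1, 3, 4, 5]
```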

How to fill a 5×5 risk assessment matrix without intuition

Filling cells is where bias appears. To reduce that risk, use a repeatable method.

A bias-resistant procedure for scoring

- Select the exact scenario and its scope (assets affected).

- List existing controls and the evidence that supports them (tests, logs, reviews).

- Assess likelihood using documented criteria and reference examples.

- Assess impact using defined ranges and the link to confidentiality, integrity, and availability (CIA).

- Assign scores and apply the agreed calculation method.

- Record assumptions and short justifications so the result stays replicable.

1) Pair assets with threats and vulnerabilities

A cell is not filled for a generic “risk”. It is filled for a specific scenario affecting assets in your scope.

2) Evaluate with context, not opinion

Avoid deciding based on perception. Request evidence: current controls, recent changes, incidents, and operational signals.

If you lack enough evidence, the result should explicitly reflect uncertainty. You can still score, but your documentation must show what you could validate and what you could not.

3) Apply the same calculation rule every time

Whether you use multiplication (likelihood x impact) or cell-based assignment, define the method. Then apply it consistently across assessments.
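The two methods can be sketched side by side. The cell table below is hypothetical and deliberately incomplete; a real table would define all 25 cells according to your own criteria.

```python
def score_by_multiplication(likelihood: int, impact: int) -> int:
    """Numeric score from 1 to 25."""
    return likelihood * impact


# Cell-based assignment: each (likelihood, impact) pair maps directly to a level.
# Only a few hypothetical cells are shown here.
CELL_LEVELS = {
    (1, 5): "medium",
    (3, 4): "high",
    (5, 5): "very high",
}


def level_by_cell(likelihood: int, impact: int) -> str:
    """Look up the agreed level; fail loudly if the cell was never defined."""
    try:
        return CELL_LEVELS[(likelihood, impact)]
    except KeyError:
        raise KeyError(f"no level defined for cell ({likelihood}, {impact})") from None


print(score_by_multiplication(3, 4))  # prints 12
print(level_by_cell(3, 4))            # prints high
```

Cell-based assignment lets you treat a rare but severe scenario differently from a frequent but minor one even when their products are equal, at the cost of maintaining the table.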

How to convert scores into treatment plans

A matrix is not the plan. The matrix is a filter for prioritization. The next step is converting risk levels into actions and evidence.

How to decide tolerance without improvising

Define thresholds before you look at the matrix. For example: very high cells require immediate mitigation, medium cells need a follow-up plan, and low cells are handled via maintenance.

Then the discussion becomes about evidence and ownership, not about personal opinions.
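Thresholds defined up front can be expressed as a small lookup. The sketch below assumes a multiplication-based score from 1 to 25; the cut-off values and the `classify` function are illustrative assumptions, not recommended limits.

```python
# Hypothetical bands: (inclusive upper bound, level, agreed response).
BANDS = [
    (4, "low", "handle via maintenance"),
    (9, "medium", "follow-up plan"),
    (16, "high", "mitigate"),
    (25, "very high", "mitigate immediately"),
]


def classify(score: int) -> tuple[str, str]:
    """Map a 1-25 score to its level and the response agreed in advance."""
    if not 1 <= score <= 25:
        raise ValueError(f"score {score} is outside the 1-25 range")
    for upper, level, response in BANDS:
        if score <= upper:
            return level, response
    raise AssertionError("unreachable: the last band covers 25")


print(classify(12))  # prints ('high', 'mitigate')
```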

Worked example: one cell that becomes treatment and evidence

Imagine this scenario: “a third-party account gains access to a support system, and a change introduces an exploitable vulnerability”.

To assign likelihood 3, you rely on documented criteria and evidence: controls exist, but there is a change history with enough exposure time, and the validation control does not cover every variant.

To assign impact 4, you connect it to CIA: if it happens, confidentiality and integrity could be affected, and availability may degrade during incident recovery. The resulting cell becomes “high”. Under your tolerance, it lands in mitigation.

Now you define treatment fields:

- controls to apply (and why)

- responsible team

- target date

- verification mechanism (what evidence proves the control works)

- and review date.

When audit time arrives, you do not have to guess.

You can show the full chain: scenario -> score -> decision -> executed evidence.
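The chain can be written down as a small record. This sketch assumes the multiplication method and an assumed band of 10 to 16 for “high”; the `Treatment` class and every field value are hypothetical placeholders.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Treatment:
    controls: list[str]
    responsible_team: str
    target_date: date
    verification: str   # what evidence proves the control works
    review_date: date


likelihood, impact = 3, 4      # values from the worked example
score = likelihood * impact    # assumes the multiplication method
level = "high" if 10 <= score <= 16 else "other"  # assumed band for "high"

treatment = Treatment(
    controls=["Extend change validation to third-party accounts"],  # hypothetical
    responsible_team="Platform security",                            # hypothetical
    target_date=date(2025, 9, 30),                                   # hypothetical
    verification="Validation test results attached to each change ticket",
    review_date=date(2025, 12, 15),                                  # hypothetical
)

print(score, level)  # prints 12 high
```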

Minimum register template (for audit and continuous improvement)

Keep at least:

- scenario and affected assets

- likelihood and impact criteria used

- resulting score and a short rationale

- tolerance decision (accept/mitigate/transfer)

- treatment plan (controls, owners, dates)

- expected evidence and how it will be verified

- and the next review date or trigger by change/incident.
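A simple completeness check can flag register entries that are missing any of these fields before they reach an audit. The field names and the `register_gaps` helper below are assumptions made for illustration; adapt them to your own register format.

```python
REQUIRED_FIELDS = (
    "scenario", "affected_assets", "likelihood_criteria", "impact_criteria",
    "score", "rationale", "decision", "treatment_plan",
    "expected_evidence", "next_review",
)

ALLOWED_DECISIONS = {"accept", "mitigate", "transfer"}


def register_gaps(entry: dict) -> list[str]:
    """List the required fields that are absent or empty in a register entry."""
    gaps = [field for field in REQUIRED_FIELDS if not entry.get(field)]
    decision = entry.get("decision")
    if decision and decision not in ALLOWED_DECISIONS:
        gaps.append("decision (unknown value)")
    return gaps


# Hypothetical entry that still lacks evidence and a review date.
entry = {
    "scenario": "Third-party change introduces an exploitable vulnerability",
    "affected_assets": ["support system"],
    "likelihood_criteria": "likelihood scale v1, level 3",
    "impact_criteria": "impact scale v1, level 4",
    "score": 12,
    "rationale": "Controls exist but validation does not cover every variant",
    "decision": "mitigate",
    "treatment_plan": "Extend change validation; owner: platform security",
}

print(register_gaps(entry))  # prints ['expected_evidence', 'next_review']
```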

Step 1: classify risk by tolerance

Define thresholds: which cells you accept, which you mitigate, and which require immediate action.

Step 2: define controls and owners per treatment

For prioritized risks, specify:

- which controls to apply,

- who is responsible,

- and when you will review effectiveness.

Step 3: align with your management system artifacts

ISO 27001 expects alignment between risk results and documentation such as the Statement of Applicability (SoA) and the treatment rationale. Traceability matters.

How to maintain and review the 5×5 risk assessment matrix over time

A matrix that is never reviewed becomes a history file. Audits typically expect consistency between plan and reality.

Review the matrix when there are:

- changes in systems or processes,

- incidents with lessons learned,

- and changes in scope.

Also schedule periodic reviews to support continuous improvement.

A realistic review cadence with triggers

You do not need daily reviews. What matters is that reviews happen when the context changes and when you learn something new about a risk from incidents, near-misses, and control effectiveness checks.

Many teams start with a formal annual review and micro-updates after relevant changes or after tabletop exercises.
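The combination of a periodic cadence and event triggers can be sketched as a single check. The `review_due` function and the 365-day default are assumptions for illustration; set the interval your own review schedule defines.

```python
from datetime import date, timedelta


def review_due(
    last_review: date,
    today: date,
    *,
    system_changed: bool = False,
    incident_recorded: bool = False,
    scope_changed: bool = False,
    max_age_days: int = 365,
) -> bool:
    """Return True when a trigger fired or the periodic review is overdue."""
    if system_changed or incident_recorded or scope_changed:
        return True
    return today - last_review >= timedelta(days=max_age_days)


print(review_due(date(2025, 1, 10), date(2025, 6, 1)))                          # prints False
print(review_due(date(2025, 1, 10), date(2025, 6, 1), incident_recorded=True))  # prints True
```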

Quality gate before you approve updates

Before you approve changes, verify:

- consistency of definitions (likelihood and impact did not shift silently)

- coherence between scoring and available evidence

- and consistency of decisions (what you mitigated should not disappear without justification).
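The first check, that definitions did not shift silently, lends itself to automation: compare the wording of each level between the approved version and the proposed one. This is a minimal sketch; `changed_levels` and the sample definitions are hypothetical.

```python
def changed_levels(previous: dict[int, str], current: dict[int, str]) -> list[int]:
    """Return the scale values whose definition was added, removed, or reworded."""
    all_values = previous.keys() | current.keys()
    return sorted(v for v in all_values if previous.get(v) != current.get(v))


# Hypothetical likelihood definitions from two versions of the matrix.
approved = {2: "No incident on record; strong controls", 3: "Occasional incidents"}
proposed = {2: "No incident on record; strong controls", 3: "One incident per year"}

print(changed_levels(approved, proposed))  # prints [3]
```

Any level returned here should have a matching change note that explains what changed and why before the update is approved.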

Versioning and change control

To keep traceability, version your matrix. Every relevant change should explain what changed, why it changed, and which scenario/date it applies to.

That prevents “the same cell” from meaning different things across time.

Cross-team calibration

To keep the matrix consistent, calibrate interpretation. Start with a short workshop: pick a few realistic scenarios and ask multiple teams to score them using the same criteria.

Then compare results and adjust definitions if the gap comes from unclear wording, not from evidence quality. Keep a simple calibration log that captures where teams disagreed and what evidence settled it.

That makes future sessions faster and prevents drift.
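A calibration session produces a set of scores per scenario and team, and the spread between the highest and lowest score shows where interpretation diverges. The sketch below assumes a tolerance of one point; the function name, team names, and scores are hypothetical.

```python
def calibration_gaps(
    scores: dict[str, dict[str, int]], max_spread: int = 1
) -> dict[str, int]:
    """Return scenarios whose score spread across teams exceeds max_spread.

    `scores` maps scenario -> team -> value on the 1-5 scale.
    """
    gaps = {}
    for scenario, by_team in scores.items():
        spread = max(by_team.values()) - min(by_team.values())
        if spread > max_spread:
            gaps[scenario] = spread
    return gaps


# Hypothetical likelihood scores from a calibration workshop.
workshop = {
    "third-party change": {"IT": 3, "Security": 4, "Operations": 3},
    "insecure access practice": {"IT": 2, "Security": 5, "Operations": 3},
}

print(calibration_gaps(workshop))  # prints {'insecure access practice': 3}
```

Scenarios flagged this way are the ones to record in the calibration log, together with the evidence that settled the disagreement.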

Common mistakes that can block progress

Using the 5×5 risk assessment matrix without documented criteria

If “likelihood 3” is undefined, the matrix becomes just a visual. Inconsistencies are easy to spot during review. That is the difference between having a matrix and having a control.

Letting different teams interpret the same cell differently

If interpretation differs, risk levels are not comparable, and that weakens consistency across time.

Your evidence set should reflect calibration: same scenario, same criteria, comparable scores.

Keeping the matrix static even when the risk changes

If the context changes but the matrix does not, the method contradicts operations. The matrix becomes a snapshot rather than a living control.

That creates paper-first governance.

Not linking scores to treatment plans and evidence

Without treatment and decision records, the matrix does not guide action. In that case, the control story is incomplete for audit.

Documentation becomes disconnected from the management system.

How PrivaLex can help with 5×5 risk assessment matrix

At PrivaLex, we help you design a risk assessment process that is consistent, documented, and usable by teams. We support:

- defining criteria and scales per scope,

- turning scores into treatments with owners,

- and keeping traceability ready for audit conversations.

We also help you turn the matrix into a management asset: shared templates, clearer assumptions, and a review flow the team can sustain.

In practice, we help you prepare the “audit package” that connects scoring to treatments and evidence, so you can answer regulator questions quickly and consistently.

Schedule a strategic session with PrivaLex and review your starting point.

Frequently Asked Questions (FAQs)

A 5×5 risk assessment matrix is a 1-to-5 likelihood and impact scoring approach that helps you prioritize risks and keep assessments consistent across evaluators. In audits, the key is not “using 5×5 for its own sake”, but proving the method is repeatable, defensible, and connected to treatment and evidence.

Define the likelihood and impact scales by documenting criteria with observable signals: control maturity, incident history, and consequence ranges for your assets. To keep comparability, avoid vague definitions.

Each number should be explainable in one short statement and backed by observable signals or ranges.

ISO 27001 does not force one single representation. The key is consistency and documented criteria. A 5×5 matrix can be a practical option.

If your current approach already yields consistent results, you can keep it. The matrix only helps if it increases homogeneity and traceability of decisions.

Use the matrix to prioritize under your risk tolerance, then define controls, owners, timelines, and review mechanisms as evidence of execution. Treatments must include management fields: an identifiable owner, a target date, and a verification method that shows the control reduced the risk.

Review the matrix at least according to your review schedule and whenever the context changes. Incidents and relevant change events typically trigger an update.

In practice, “extra” reviews should be triggered by real learning: incidents, near-misses, scope changes, and changes in control effectiveness.

Keep the methodology and criteria, the calculated results, the link to treatments, and references in your management system documentation. That allows you to demonstrate consistency and maintenance.

The auditor should be able to follow the thread: scenario evaluated, score assigned, decision made, and evidence showing execution.
